How do you “train” an AI to do something?

If you haven’t read the previous post, you might want to do so now.

So, once you have a neural net configured, how do you decide what weights to assign to all of those connections? This is the process known as “training” the model.

Why do they call it “training”?

At the highest level, training an AI is a bit like training a person. You show them some examples of how the work is done, and you let them do the work under supervision, and correct them when they get it wrong. Eventually, they learn to do it “well enough”, and you let them work unsupervised.

How do you train an AI?

This can get very complicated, but here’s the simplest way to do it:

- Start with a neural net with completely-random weights.

- Gather a set of inputs, and their expected outputs. This is your “training set”.

- Run each of the training inputs through the net. Presumably, most/all of them will generate the “wrong” output.

- Generate a “score” for how badly the neural net did at this set of tasks.

- Adjust the weights in a way that reduces the overall error generated by the training set.

Simple enough, right? But step 5 is ridiculously computationally-expensive. Each time you run a single input through the net, you have to do some number of calculations, call it N. If you adjust one of the weights, then in order to calculate the new error score, you need to run each of the test inputs through the network again, for N x M calculations.

If you’ve got millions of weights, and millions of test inputs, that’s trillions of calculations for each step of training. And you will likely do many steps of optimization to get the net trained. The key point here is that while running a neural network takes a lot of computational resources, training one is orders of magnitude more work.

How does training adjust the weights?

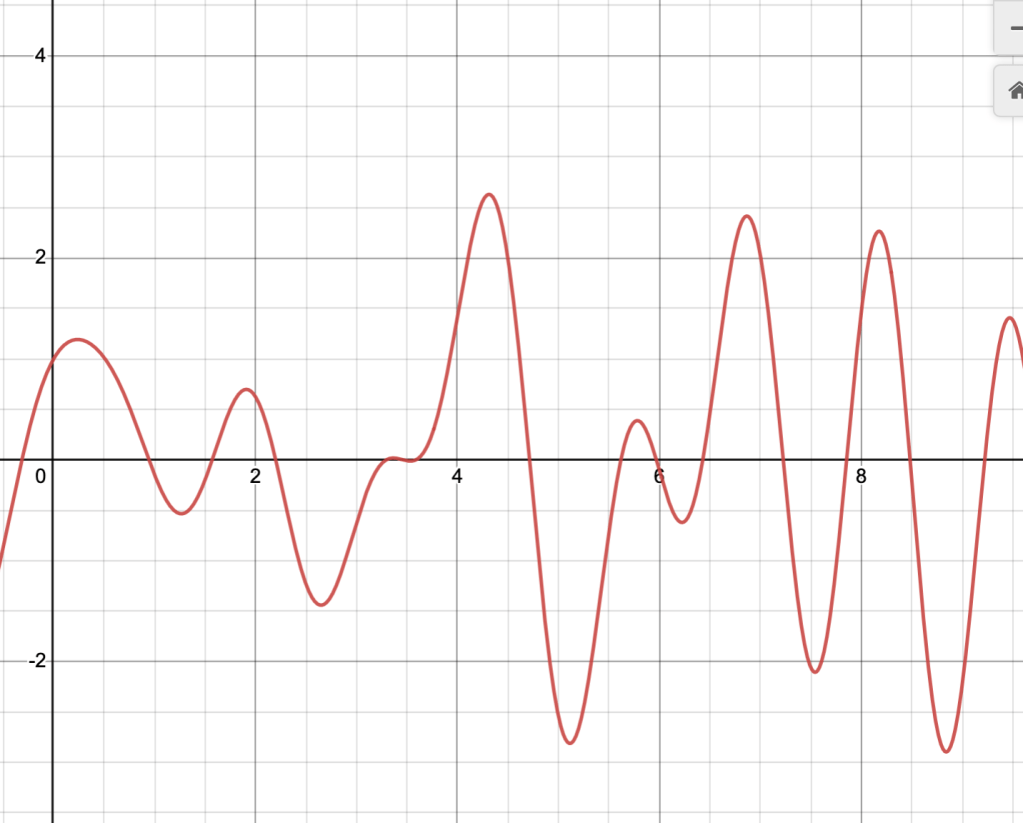

I am not going to go into this into any great detail unless someone asks for it, because it’s very math-y. It’s very similar in principle to finding the lowest value of a simple mathematical function:

The big difference is that in the case of network training, instead of a 2D graph, it’s a million-dimensional graph, which is a lot harder to visualize. But the basic principle applies – start at a random point on the graph, and move around until you find a “lower” spot. Use some clever techniques to ensure you don’t get stuck in a “local minimum”, like the one at x = 6.25 in the graph above.

What are some common issues with training?

The training process is very sensitive to errors in preparing the training data and in evaluating the results. Some common problems you see in practice are:

Over-fitting

If the net is sufficiently large, and the training data is sufficiently small (or largely repetitive), you can get a net which produces perfect results for the input data, but utterly fails at producing valid output for anything outside the training set.

And this is a key thing – a neural net will always produce some output, for a given input. Depending on the design of the network, and especially any post-processing being done, you might get an output telling you the level of confidence it has in the results, but then again, you might not.

Optimizing for the wrong thing

This is sort of a more generic version of the over-fitting problem. The training process tries to minimize the overall error for a set of data. It doesn’t actually care in any way about what all of the internal state of the network represents, it’s just trying to produce the “correct” outputs for the given inputs.

A widely-circulated anecdote of this dates from several years ago, when an attempt was being made to train an anti-tank missile to detect enemy tanks and lock on to them. As it happens, the training data only included images of “tanks” in high light conditions, but the “not a tank” images were taken in a variety of lighting conditions. The end result was that anything vaguely box-like that was well-illuminated was determined to be a tank. Not at all what the designers had in mind.

This tendency for AI training to optimize for “the wrong thing” crops up over and over in the research. One experiment was using AI to build simulated robots with the “fastest” performance for a simulated task. What ended up happening was that the AI came up with a design that exploited logic bugs in the simulation to move faster than the rules should have allowed.

Training from biased samples

An argument you’ll occasionally see given for implementing AI in decision-making processes is that it will eliminate human biases from the system. Well, okay – but where do you get the “correct” outputs for a training set? By either consulting the human experts, or by taking historical decisions as “correct”. In that case, what you’re actually doing is codifying the existing biases of the system, except that you’re doing it in a way which is fundamentally opaque, and adds a veneer of impartiality.

An AI doesn’t have to be inherently racist to perpetuate racism. It just has to be trained on a set of data that has not been examined for racial bias in the first place. Even for something as simple as the “background blur” in Google Meet, Zoom, and the like. Early versions of those systems did not do well at all with darker skin tones, since they’d been trained almost exclusively on White American faces at first.

Oversight failure

If you’re depending on humans to identify bad outputs, in order to correct the AI when it makes mistakes, then you run into a number of problems, including that the single variable “bad” or “good” isn’t enough to navigate the multiple, possibly conflicting goals of any given AI project.

I found a pretty great exploration of the depth of the issue with human “correction” of AI here, and it’s worth reading to see some of the ways in which this is an extremely tricky and limited technique.

This is to say nothing of the fact that a lot of AI training verification is done via low-cost labor markets like Amazon’s Mechanical Turk, which are, to be frank, not optimizing for ethical expertise, just for throughput and cost.

How does this lead to art?

Now that you know enough about how AI works to really understand what happening inside the black box, you might be wondering how systems like Midjourney can make “original” artworks, how ChatGPT can write a song, or how Lensa can make a selfie of you as a Unicorn Princess.

Leave a comment